Acknowledgements The Committee acknowledges with gratitude the role of Deputy Governor Shri.H.R.Khan in supporting and guiding the Committee. The Committee gratefully acknowledges the detailed guidance and encouragement by the Executive Director Shri.G.Padmanabhan and for laying down the detailed framework for the work of the Committee. The Committee also deeply acknowledges the valuable guidance of previous CGM-in-charge Shri.A.S.Ramasastri, (currently Director, IDRBT). The Committee also gratefully acknowledges the detailed guidance and key inputs received for enhancing the report from Shri.S.Ganesh Kumar, present CGM-in-charge, DIT,CO. The Convenor acknowledges the cooperation extended by the members of the Committee in completing the task entrusted to it. The Group wishes to thankfully acknowledge the Secretarial support provided by the Policy Division of DIT, Central Office consisting of Ms.Nikhila Koduri, GM(since transferred), Shri. Devesh Lal, GM and Ms. Jolly Roy, AGM for conduct of meetings of the Committee, preparing materials for discussion and compiling draft materials. The Committee gratefully acknowledges the contribution by Shri.N.Suganandh, DGM, DIT, CO for his detailed research assistance and dedicated efforts in compiling the report. Useful inputs from Shri.Purnendu Kumar, Asst. Adviser, DSIM on chapter on XBRL project is also acknowledged.

Executive Summary Reserve Bank has always emphasized the importance of both quality and timeliness of data to enable transforming the data into information that is useful for decision making purposes. To achieve this, uniform data standards are of vital importance. RBI issued the Approach paper on ADF in 2010 and laid down a detailed road map for its implementation by the banks. Banks initiated measures to implement the ADF. However, the progress in implementation varies across banks. Simultaneously, work is also underway to develop the XBRL schema for returns which enables standardization and rationalization of various returns with internationally accepted best practices of electronic transmission of data apart from work on Harmonization of Banking Statistics. This Committee has been set up to bring about synergy and uniformity of efforts being undertaken in the area of data reporting and data standardization. In the context of terms of reference to the Committee, the examination of any given stream of thought on data standardization would necessitate addressing a canvas of issues relevant to our context so as to fully address its different dimensions. Accordingly, the following aspects were examined and covered by the Committee: - Discussion of basic conceptual perspectives and various data/information standards in financial sector

- Data quality, data gap issues – international developments

- Issues in data management and data quality in banks

- Need for Data governance framework in banks

- Data standardization and reporting – commercial banks, NBFCs, UCBs

- ADF Project – Status, Issues and way forward

- XBRL project – status and way forward

- Automating data flow from banks to RBI

- Data Assurance process at RBI

- Data standardization - System-wide Perspective

- New developments and future perspectives

Summary of recommendations are furnished below: (I) Data standards 1) Regulatory data reporting standards - The data exchange standards need to be based on open standards and allow for standardization of data elements and minimizing data duplication/redundancy. RBI had already embarked on XBRL(which is based on XML platform) as the standard platform for a set of regulatory returns which may be continued for rest of returns or data elements. Thus, XBRL may be the reporting standard for regulatory reporting from banks to RBI. 2) The well-known Statistical Data and Metadata eXchange (SDMX) is recommended as a standard for exchanging statistical data. 3) In regard to standardisation of coding structures, in accordance with international standards, the ISO 4217 currency codes and ISO 3166 country codes can be used. 4) System of National Accounts (SNA) 2008 can be used for classification of institutional categories. 5) International Standard Industrial Classification (ISIC)/ National Industrial Classification (NIC) codes for economic activity being financed by a loan may be incorporated. 6) The ISO 20022 standard is recommended to be the messaging standards for the critical payment systems. RTGS in India already uses the ISO 20022 message formats. 7) In order to acquire single view of transactions in respect of a customer, banks are required to allot unique customer id. Given that no single identifier can represent all categories of customers of banks, the differentiation may need to be made by mapping with the identifiers presently available. Recently, Clearing Corporation of India Limited (CCIL) has been selected to act as a Local Operating Unit in India, for issuing globally compatible unique identity codes (named as legal entity identifier or LEI) to entities which are parties to a financial transaction in India. Given the LEI initiative, efforts to facilitate LEI for legal entities involved in financial transactions across financial system needs to be expedited to maximise coverage over the medium term. 8) Given the complexity of some of the corporate entities with numerous subsidiaries including step down subsidiaries, there is a need for usage of LEI or similar methodology to link the complex hierarchy of any corporate to facilitate ease of identification of total credit exposure of corporate groups. While it is reported that LEI application of CCIL has provision for the same, the utility may need to effectively leveraged to map the corporate group hierarchy. 9) While presently LEI architecture caters to legal entities involved in financial transactions, ultimately LEI or similar system needs to be made broad-based to incorporate other categories of customers like partnership firms and individuals. 10) For conduct of electronic transactions and reporting purposes in financial markets, well known international standards like ISO based standards can be considered where possible. 11) In order to take up data element/return standardisation through standardising or harmonising definitions, efforts of earlier working groups(Committee on rationalisation of returns and Committee on harmonisation of banking statistics) can be consolidated by setting up an inter-departmental project group within RBI which can work in a project mode so as to ensure comprehensive and effective implementation of standardisation and consistency of data element definitions across complete universe of returns/data requirements of RBI. II. Key components of Data governance architecture in banks and related aspects -

Committee recommends that key components of data governance architecture in banks may incorporate following aspects: -

Formulation of Data governance or information management policy with emphasis on various aspects like data governance organisational structure, data ownership, definition of roles and responsibilities, implementation of data governance processes and procedures at individual functions/departments, development of a common understanding of data, data quality management, data dissemination policy and management of data governance through metrics and measurements. -

Overall oversight of data governance may be with the Audit Committee of Board (ACB) of a bank or a specific Committee nominated by the Board. -

Formation of executive level Data Governance Committee or entrusting responsibility to existing information management committee if already existing. Data governance responsibilities and accountabilities should be clear, measured and managed to ensure sustained benefit to the bank. -

Data Ownership related aspects to be considered include overall responsibility for data, assigning ownership of key data elements to data controllers or stewards, assigning data element quality within business areas, implementing data quality monitoring and controls, providing data quality update to management/data governance committee and providing data quality feedback to business data owners. -

The data governance organization defines the basis on which the ownerships of data and information will be segregated across the bank. While there can be numerous models for the same, the three typical models are – Process Based, Subject Areas Based and Region Based. Data ownership needs to be primarily based on the business function. -

Platforms and data warehouse/s need to employ common taxonomies/data definitions/meta data. The metadata ownership may be clearly defined across the bank for various metadata categories. The owners need to ensure that the metadata is complete, current and correct. Capture the metadata from the individual source applications based on the metadata model for the individual source applications. The captured metadata need to be linked across the applications using pre-defined rules. The rules to be applied for synchronization of metadata also need to be defined. -

The metadata (data definitions) may be synchronized across various source systems and also with the RBI definitions for regulatory reporting. -

To help drive data governance success, measurements and metrics may be put in place which define and structure data quality expectations across the bank for which various data governance metrics and measures would be required. Data needs to be monitored, measured, and reported across various stages: data acquisition, data integration, data presentation and data models, dictionaries, reference data and metadata repositories. -

Internal audit function to provide for periodic reviews of data governance processes and functions and report on the issues to ACB. -

Detecting and correcting the faulty data manually is very tedious and time consuming. It is in this context the validation methods based on statistics, machine learning and pattern recognition gain importance. Many DBMS, DWDM product vendors now offer Data Profiling, Data Quality, Master Data Management services. Banks can take advantage of all these tools and techniques to keep their data clean. -

Various focussed data quality assessment and improvement projects need to be undertaken. -

Banks can also endeavour to establish a centralised analytics team as a centre of excellence in pattern recognition technology and artificial intelligence (AI) to provide cutting edge analysis and database tools or information management tools to support business decisions. -

Apart from providing enhanced focus during AFI/RBS, data governance mechanisms in banks may also be examined intensively through focussed thematic reviews by DBS of RBI. Based on outcome of thematic reviews, detailed guidance may be issued to banks to address issues identified during review. -

Banks, in particular domestic SIBs, may also be advised to keep in context BIS document “Principles for effective risk data aggregation and risk reporting” as part of their information management process. -

Guidance on Best practices on data governance and information management can be formulated by IDRBT. -

RBI may facilitate creation of Data Governance Forum under the aegis of IBA or learning institutions like CAFRAL or NIBM with other stakeholders like IDRBT, RBI, IBA, banking industry technology consortiums and banks, to assist in development of common taxonomies/data definitions/meta data for banking system. -

Bank Technology Consortiums under the aegis of IDRBT and other stakeholders like banks can validate critical banking applications like CBS and provide guidance on expected minimum key information requirements/validation rules and address to the extent possible different customizations across banks. While specifying key regulations, RBI may also endeavour to specify any key system related validation parameters and details of data quality dimensions expected from concerned regulated entities. -

Committee also prepared an illustrative list of data aspects pertaining to credit function that would need to be addressed by commercial banks to facilitate data standardization, data comparability and data reliability across banks. III. Data standardization in regulatory reporting–Commercial banks, UCBs, NBFCs 1) XBRL platform may be gradually expanded across the full set of regulatory returns. 2) Robust internal governance structure needs to be set up in regulatory entities with clear responsibilities and accountabilities to ensure correct, complete, automated and timely submission of regulatory/supervisory returns. 3) Regulatory reporting - Commercial Banks: -

Adoption of uniform codes among different returns of RBI will reduce inconsistency among returns. For eg. DSIM of RBI collects industry classification of credit as per NIC codes while other departments use different classification. Each bank has adopted its own approach to map NIC codes. Analysis of mappings of some banks showed apparent divergences. Hence, as part of data standardisation efforts, data collected by other returns may also be brought in alignment with the usage of common or standardised codes incorporated in BSR. -

The BSR codes need to be updated based on latest NIC 2008 classification. The BSR codes may be reviewed periodically and updated. Further, it should be possible to establish one-to-one mapping of sector/ industry codes in various other regulatory returns from the same. -

The nature of returns are generally dimensional in nature, consisting of various components like measures, concepts, elements, attributes, dimensions and distributions. A suitable data model may be generated to facilitate element-based, simplified and standardised data collection process by RBI under a generic model structure that is suitable for both primary and secondary data. -

There is a need to ultimately move over to “data” centric approach from the current “form” centric approach. Under a data centric approach methodology, any data point must be expressed by its “primary element” and all additional dimensions necessary to their identification. As “form” centric approach is oriented to the visualization of the data in certain format, it may be used for reviewing purpose only. Thus, from a medium term perspective, moving from return based approach to data element based approach needs to be considered -

The values of various attributes and dimensions should be standardised to enable the collation of data from different domains. -

Suitable data sets with varied nature like hierarchical, distributional or dimensional can be created to facilitate submission of data in summarised or granular form as the case may be from the central repository of the banks. -

A good feedback mechanism from banks to RBI and vice versa can help maintain uniqueness in data definitions. As and when multiple data definitions from XBRL taxonomy given by RBI map to same data element in any bank, they need to be flagged to RBI. -

Phased implementation of various standardised data definitions can be commenced based on elements which were already standardised. 4) NBFCs and UCBs: -

Rationalisation of returns needs to be attempted for NBFCs and UCBs. An exercise carried out earlier indicated significant duplication in the information provided through various returns. The Committee recommends that these returns may be rationalised by identifying major data elements and removing duplicate data elements. -

The Committee recommends an online data collection mechanism for larger NBFCs and Tier II UCBs. -

In due course, after rationalisation exercise, data element based return submission through XBRL may also be initiated. -

Suitable data model and robust meta data system may be developed. IV. ADF implementation by banks 1) Use of ADF for Internal MIS - The RBI Approach Paper highlighted usage of ADF platform for generating internal MIS as one of the key benefits of ADF. In this regard, banks may explore using the platform for generating internal MIS and other uses. Indicatively some aspects include : - NPA Management Automation Module

- utomation of SLBC Returns

2) Detailed survey can be carried out by RBI to ascertain the status of ADF implementation by banks. Feedback may also be obtained from DBS regarding any issues relating to ADF implementation obtained during AFI/RBS examination process. Any manual intervention from source systems to ADF central repository needs to be ascertained. Independent assurance on the ADF central repository mechanism in individual banks may also be verified. This would enable assessment of the quality and comprehensiveness of ADF implementation by individual banks. Any specific issues may be taken up with concerned banks for remediation. 3) Banks may take steps to enable the ADF Platform to cater to the Risk Based Supervision(RBS) data requirements by suitably mapping the RBS data point requirements. Thus, the ADF structure should be made use of and aligned to the RBS set-up so that synergies can be built-in, data quality and consistency can be enabled and the overall system can be made more efficient. 4) Existing ADF platform needs to be leveraged by prescribing the necessary granular data fields to be captured by banks to achieve consistency and uniformity in regulatory reporting. 5) Banks may also port the necessary details required by RBI as indicated in Guidelines on “Framework for Revitalizing Distressed Assets in the Economy - Guidelines on Joint Lenders' Forum (JLF) and Corrective Action Plan (CAP)” in February, 2014 under ADF central repository platform. 6) Depending on the requirement of RBI regarding granularity of data, ADF system needs to be suitably updated to provide for the requisite granular data fields at the central repository level. The ADF system of the banks should be designed flexibly to accommodate any anticipated changes in the format of return, i.e., addition and deletion of data elements. V. XBRL Project of RBI 1) Similar forms can be taken together within/ across the departments of RBI and thus common reporting elements can be arrived at. Rationalisation /Consolidation of returns before taking up the returns pertaining to a department must be done. The rationalisation / consolidation of returns may be examined and reviewed on a periodic basis. 2) For granular account level data and transactional multi-dimensional data, RBI may develop and provide specific details of RDBMS/text file structures along with standardised code lists and basic validation rules so that banks can run the validation logics to ascertain that the datasets are submission-ready. In this connection, XBRL based data element submission may also be explored. 3) It is expected that banks would generate the instance document from the Centralised Data Repositories (CDR) and submit the same to RBI without manual intervention. The banks should validate the generated instance documents based on the XBRL taxonomy and validation rules before sending them to the Reserve Bank. Thus, the present approach of spreadsheet(Excel) based submission of XBRL returns needs to be given up ultimately. 4) An Inter-Departmental Data Governance Group (DGG) for the RBI as a whole may be formed, so that the process of rationalization regarding data elements, periodicity, need for provisional returns can be carried out in a concerted manner. All future returns to be prescribed by any department may be routed through the DGG, to avoid duplication. 5) As part of its data governance activities, the DGG may also pro-actively identify any data gaps in the evolving milieu and prepare plan of action to address the gap. 6) The XBRL taxonomy must include data definitions so as to completely leverage the utility offered by XBRL. 7) The XBRL taxonomy should be designed flexibly so as to take care of the anticipated changes in the format of return, i.e., addition and deletion of data elements. 8) The XBRL based submission by financial companies to MCA should be shared across the regulators as required. 9) Since new tools/software are developed for leveraging XBRL, there needs to be process of continuous monitoring of new developments so as to examine their utility and possible value addition. 10) Ultimately, the logical location for storage of XBRL data is a Data Warehouse. Therefore the existing Data Ware House needs to be revamped with Next Generation Data Ware House capabilities. VI. Recommendations on Automating data flow from banks to RBI 1) Using secure network connections between the RBI server and the bank’s ADF server, the contents of the dataset can be either pulled through ETL mode or pushed through SFTP mode and loaded onto the RBI server automatically as per the periodicity without any manual intervention. Pushing of data by banks could enable easier management of the process at RBI end. An acknowledgement or the result of the loading process can be automatically communicated to the bank’s ADF team for action, if necessary. 2) The validation schemes may also be expressed in XBRL/XML form so that the systems at banks automatically understand the requirement, accordingly process their data and return the data to RBI, without any manual intervention. This would enable a fully automated data flow from banks to RBI even with dynamic and changing validation criteria. 3) While the traditional RDBMS infrastructure in place in RBI may be used for storage and retrieval of aggregated and finalized data, Big-data solutions may also be considered for micro and transactional datasets given their high volume, velocity and multi-dimensional nature. Big Data solutions also help enhance analytical capability in the new data paradigm particularly in the area of banking supervision. 4) The enterprise-wide data warehouse (EDW) of RBI should be made the single repository for regulatory/supervisory data pertaining to all regulated entities of RBI with appropriate access rights. Any unstructured components pertaining to RBS data may be maintained in EDW using new tools available for such items. 5) As a key support for risk based supervision for commercial banks, internal RBI MIS solution needs to seamlessly generate two important sets of collated information: (i) Risk Profile of banks (risk-related data – mostly new data elements), and (ii) Bank Profile (mostly financial data – DSB Returns and additional granular data) based on data supplied by banks. 6) Once the system stabilises, the periodicity of data can be reviewed so as to obtain any particular set of data at shorter intervals or even up to near real time. VII. Recommendations on data quality assurance process 1) Exclusive data quality assurance function can be created under the information management unit of RBI. 2) A data quality assurance framework may be formulated by RBI detailing the key data quality dimensions and systematic processes to be followed. The various key dimensions include relevance, accuracy, timeliness, accessibility and clarity, comparability and coherence. The framework may also be periodically reviewed. 3) Various validation checks like sequence check, range check, limit check, existence check, duplicate check, completeness check, logical relationship check, plausibility checks, outlier checks are among the key checks which need to be considered and documented for various datasets with assistance from domain specialists. 4) Usage of common systems for data collection, storage and compilation would help provide environment for robust implementation of systematic data quality assurance procedures. 5) Deployment of professional data quality tools as part of the data warehouse infrastructure could also provide for comprehensive assessment of data quality dimensions. 6) Whenever data are received and compiled, quality assessment reports that summarize the results of various quality checks may also be generated internally. VIII. System-wide Improvements: 1) Given that standards are considered a classic public good, with costs borne by a few and benefits accruing over time for many entities, active involvement of regulators and Government in now internationally acknowledged as key towards solving the collective action problems created by these disincentives. Inter-regulatory forums could help facilitate improvements in data/information management standards across the financial sector to benefit all stakeholders and furthering collaboration with international stakeholders. 2) A separate standing unit Financial Data Standards and Research Group may be considered with involvement of various stakeholders like RBI, IBA, banks, ICAI, IDRBT, SEBI, MCA, NIBM, CAFRAL etc for looking at the financial data elements/standards and to try to bring them into holistic data models apart from mapping with applicable international standards. 3) Regulators like RBI, SEBI, MCA are in the process of undertaking various XBRL projects. Given the benefits offered by XBRL and its usage across the globe by regulatory bodies, all the regulators may explore possibilities of commonalities in taxonomy and data elements to the extent possible. Protocols and formats may be formulated for sharing of the data among themselves. 4) In regard to OTC derivatives, one of the issues being debated is data portability and aggregation among the trade repositories spanning countries and jurisdictions. Hence, it is important to be cognizant of the needs of uniformity of standards across the globe and the need for our repository framework to have sufficient flexibility to conform to international standards and best practices as they evolve depending upon their relevance in the Indian context. 5) Ultimately, from a banking system perspective full benefit would arise by enabling transactional and accounting systems in banks to directly tag and output data in formats like XBRL to maximize efficiency and benefit. Thus, there is need for integration of standard formats like XBRL in internal applications/accounting systems of banks. The present scope of XBRL data definitions have to be further extended to cover in depth data definitions covering almost all data elements that are required to carry banking business. 6) In respect of knowledge sharing and research, various measures recommended include (i) Research by IDRBT regarding ways and means of leveraging new data technological platforms like XBRL for enhancing overall efficiencies of banking system (ii) conducting of pilot for enhancing leveraging of technologies like XBRL for internal uses by banks. 7) Standard Business Reporting, which involves leveraging technologies like XBRL by Government for larger benefits beyond the field of regulatory reporting, is being implemented in various countries like Australia and Netherlands. The same may be explored in India by Government of India in a phased manner. 8) As the leveraging of machine readable tagged data reporting increases, the audit and assurance paradigm also need to get re-engineered to carry out an electronic audit and electronic stamp of certification using digital signatures. 9) Committee recognizes that coordinated efforts are being carried out by various organizations which have developed standards like FIX, FpML, XBRL, ISD etc for laying the groundwork for defining a common underlying financial model based on ISO 20022 standard. Costs of migration and inter-operability would be key factors going forward. 10) As had been indicated in Chapter II, comparability of financial data across countries is a key challenge faced globally. Increasing adoption of IFRS across countries is a positive development. While there is large number of convergence in capital standards via Basel II and Basel II, there are variations in details and level of implementations across countries. While the G-20 Data Gap initiative is a work in progress, there is also need for international stakeholders to analyse and examine how technologies like XBRL can help facilitate ease of comparability of data as also to identify differences between countries in respect of financial/regulatory measures and reporting rules in an automated manner. 11) Financial instrument reference database could be explored with focus on key components relating to ontology, identifiers and metadata and valuation and analytical tools, akin to such initiatives in US. 12) A single Data Point Model or methodology at international level can be explored for the elaboration and documentation of XBRL taxonomies 13) GoI has plans to establish Financial Data Management Centre(FDMC) as a repository of all financial regulatory data.Large investments already made by the individual regulators also needs to be factored in. 14) There is also need to incorporate training and education on the new technologies like XBRL by various academic bodies as also training/learning institutions so as to help in capacity building and to improve the availability of trained resources. IX. Future trend and developments 1) Committee recommends that research/assessment of new developments in technology and financial data/technology standards need to be made a formal and integral part of the information system governance of banks and the regulator. 2) Banking technology research institute IDRBT may carry out research on new technologies/development and serve as a think tank in this regard. 3) Banks may explore Big Data solutions for leveraging various benefits of the new paradigm concerned with volume and velocity of data. 4) Any financial technical data standards needs to be of the nature of open standards, inter-operable and scalable in nature. Due impact assessment and pilot run would also be necessary before implementing on larger scale. X. Implementation of key recommendations 1) The suggested timeframe for implementing the recommendations of the Committee is indicated at Annex VII.

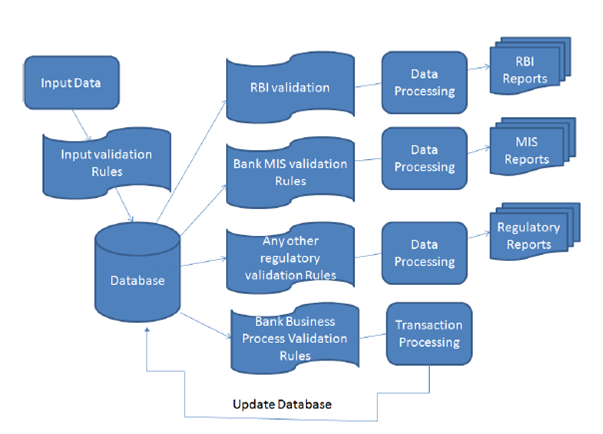

Introduction Committee on Data Standardization THE GENESIS The IT Vision 2011-17 document of the Reserve Bank had emphasized the importance of both quality and timeliness of data for its processing into useful information for MIS and decision making purposes. To achieve this, uniform data reporting standards are of vital importance. Banks are in various stages of implementation of the Automated Data Flow (ADF), a project initiated by the Reserve Bank to ensure smooth and timely flow of quality data from the banks to the Reserve Bank. Simultaneously, work is also underway to develop the XBRL schema for regulatory returns which enables standardization and rationalization of various returns with internationally accepted best practices of electronic transmission of data as also on Harmonization of Banking Statistics. It is in this context that RBI had approved the formation of a Committee for Data Standardization which inter-alia will bring about synergy and uniformity in the efforts being undertaken in the areas of data reporting and data standardization. DIT CO had initiated the Automated Data Flow (ADF) project in August 2010 and as enumerated in ADF Approach Paper, it was envisaged that under the project data from various source systems of banks would flow to a centralised MIS server within the bank which in turn would be used to furnish regulatory reports and returns to RBI in an automated manner without any manual intervention. The banks were at varied stages of implementing the project. A comprehensive Status Note was put up to top management. Based on the directions of top management, various distinct efforts were initiated for addressing issues around quality of regulatory data reported by the banks to RBI. The main brief of DIT Committee on Data Standardisation is, inter alia, to bring about a synergy and uniformity into data reporting and data standardisation process. The committee has representatives from various departments of the Bank such as DSIM, DIT, DBS, and a large Regional Office in addition to experts from IT firm Infosys, chief of returns Governance Group of three scheduled commercial banks (SBI, ICICI, and HSBC), IDRBT with Shri P. Parthasarathi, CGM, DIT as convener of the committee. The members of the Committee are given below: | Department/Office/Institute | Name | | From RBI | | 1 | DIT (Convenor) | Shri P Parthasarathi, CGM | | 2 | DSIM | Dr. A R Joshi, Adviser | | 3 | Chennai RO( Presently In Mumbai, RO) | Shri G P Borah, CGM | | 4 | DBS,CO | Shri Aloke Chatterjee, GM | | 5 | DIT | Shri Devesh Lal, GM* | | Other Organizations | | 6 | IDBRT | Dr. A S Ramasastri | | 7 | IDRBT | Dr. V Radha | | 8 | SBI | Shri Kajal Ghose | | 9 | HSBC | Shri A Narayanan | | 10 | ICICI | Shri Sanjay Singhvi | | 11 | Infosys | Shri C N Raghupathi | | *Shri.Devesh Lal replaced Ms.Nikhila Koduri, GM subsequent to her transfer. | The terms of reference of the committee are as follows: - to study the Quality Assurance Procedures and function relating to data in few other central banks and analyse it’s possible emulation at the RBI.

- to study the existing reporting system and its usage in various departments in the Bank.

- to peruse the data standards implemented by XBRL and data warehouse and

- to recommend on uniform data standards for banking system

- any other related issues

The approach of the Committee was to form sub-groups for focussed study of subject areas under remit of the Committee. The secretarial assistance for the committee was provided by DIT, CO.

Chapter I

Data Standardization -Setting the Context - Perspectives

and overview on standardization 1.1 Introduction A fundamental issue for enabling effective decision making is obtaining consistent, reliable and robust data. In the field of banking too, data is a key component and affects or impacts the information and knowledge gleaned from the same. The major developments in finance and the speed with which it has occurred, have been built upon standardized methods to exchange data. This chapter sets the context and leads to further unraveling of the various dimensions of data standardization and related issues in subsequent chapters of the report. This chapter indicates in general the importance of standardization and then delves into the world of IT standards by giving an overview of standardization in the IT sector, brief history of IT standards and importance of data standards. 1.2 Concept of Standards The term “standard” has various definitions. While various dictionaries offer multiple definitions, a commonly offered definition is one offered by the International Organization for Standardization (ISO). The website of ISO defines a standard as a document that provides requirements, specifications, guidelines or characteristics that can be used consistently to ensure that materials, products, processes and services are fit for their purpose. 1.3 Benefits of standardization As per ISO, standards help bring technological, economic and societal benefits. They help to harmonize technical specifications of products and services making industry more efficient and breaking down barriers to international trade. ISO has documented several research and case studies espousing the various empirical benefits of standards. The value of standards to business and indeed the economy at large comes from the effective and efficient adoption, uptake of a standard across its target population. Thus, the effective adoption and diffusion of a standard is vital to the standardization process for economic benefits to be realized. 1.4 Classification of standards There could be either horizontal or vertical standards. Every industry has standards that are specific to the business domains they deal with. Finance, Manufacturing, Medical etc. are examples of industry sectors that maintain their own standards. Thus, each of these areas creates vertical standards. These vertical standards often overlap in areas of applicability, particularly in respect of data representation and information technology, resulting in standards that have a similar purpose but are different in terms of implementation and the vocabulary or terminology used to specify the standard. Information technology standards are generally applicable across multiple industry sectors. Because of their general applicability, these standards are often referred to as horizontal standards. Given the rapid developments in information technology arena, many of the key horizontal technology standards are falling within the broad category of Information and Communication Technology (ICT). In recent years there has been a push to implement industry-specific vertical standards using existing horizontal technology standards, for example XML-based vertical standards such as XBRL. In this case, the horizontal syntax standard XML is used to create specific vertical applications for financial/accounting data. 1.5 Information Technology standards Individuals, businesses and governments throughout the world use Information Technology (IT) extensively. In order to facilitate this extensive use of IT, systems need to be interconnected and work across applications, organizations and geographic locations. This has resulted in a dramatic jump in network connections, a proliferation of computing devices and varied uses of IT based applications. These interactions highlight the critical need for a comprehensive and consistent set of standards within the IT sector. Standards activities in the IT sector were said to have begun in the 1960s. Early standardization efforts were for certain programming languages and protocols for moving information around. Further, the early standards were reported to have been mainly focused on the syntax rather than describing content in terms of the nature of the information being standardized. Subsequently, computer readable forms of syntactic specifications have emerged like XML (eXtensible Markup Language). The interoperability and data exchange across different vendors, platforms, applications and software is dependent upon standardized interfaces, protocols, services and formats. Therefore, standards in information technology can help the portability and compatibility of systems and hence enable ease of exchanging information between systems. 1.6 Data standards The data standards help facilitate the exchange of data between systems, aggregation of data from multiple source systems, comparison of data among unrelated systems and automation of processes for storing, reporting, and processing data. Data standards define the format, content, and syntax of data, providing a common language that enables precise identification of entities and instruments, the relationships among them, and the data to describe them. Data standards also can help enhance data quality by supporting consisting metadata. Standardized Definitions used across the public and private sectors improve the value of data for analysis. When key terms are not clearly defined, financial analysts are unable to accurately interpret and compare data, resulting in a lack of confidence in the results. Standards are particularly needed when data include a common term that can be understood in various ways. Standardized formats help analysts aggregate and compare data, and automate processes for storing, reporting, and processing data. It is important to consider how data may be used and to apply a standard format, even to routine information.1 1.7 Data standardization in Indian banking system The foresaid discussion provides a general overview of the concept of standards, its different dimensions and regarding data standards. In the context of terms of reference to the Committee, Committee opines that the examination of any given stream of thought on data standardization would necessitate addressing canvas of issues so as to fully address its different dimensions. Accordingly, the following aspects were examined and covered by the Committee: -

Discussion of basic conceptual perspectives and various current data/information standards in financial sector -

International developments relating to data quality, data gap and data standardisation issues -

Issues in data management and data quality in banks -

Need for Data governance framework in banks -

Data standardization and reporting – commercial banks, NBFCs, UCBs -

ADF Project – Status and Issues -

XBRL project – status and way forward -

Automating flow of regulatory data from banks to RBI -

Data quality assurance process at RBI -

Data standardization - System-wide Perspectives -

New developments and future perspectives The subsequent chapters elucidate the assessment of the Committee on these dimensions and the recommendations thereon.

Chapter II–Data gaps, data quality and data standardization–

International developments 2.1 Introduction Financial crisis had revealed various issues pertaining to data quality. In the aftermath of the same, various data quality issues were identified and specific initiatives to address the same are being carried out. This chapter details the various issues pertaining to data quality, data gaps and data standardization arising out of financial crisis and various steps currently being taken in this regard. The experience of the financial crisis led to a call by the Group of Twenty (G-20) Finance Ministers and Central Bank Governors for the IMF and the Financial Stability Forum (FSF), the predecessor of the FSB, “to explore gaps and provide appropriate proposals for strengthening data collection.” As indicated by BIS, “the emphasis in the 1990s and early 2000s among the international community for comparability, consistency and quality of data within and across countries remains relevant.” 2.2 Context The integration of economies and markets, as evidenced by the financial crisis spreading worldwide, highlights the critical importance of relevant statistics that are timely and internally consistent as well as comparable across countries. The international community has made a great deal of progress in recent years in developing a methodologically consistent economic and financial statistics system covering traditional datasets, and in developing and implementing data transparency initiatives. While within macroeconomic (real sector, external sector, monetary and financial, and government finance) statistics, the System of National Accounts (SNA) is considered the main organizing framework, for macro-prudential statistics, an analogous structure/framework is not yet in place, but there is on-going progress in developing a consensus among data users on key concepts and indicators, including in relation to the SNA.2 While it is generally accepted that the financial crisis was not the result of a lack of proper economic and financial statistics, it exposed a significant lack of information as well as data gaps on key financial sector vulnerabilities relevant for financial stability analysis. Some of these gaps affected the dynamics of the crisis, as markets and policy makers were caught unprepared by events in areas poorly covered by existing information sources, such as those arising from exposures taken through complex instruments and off-balance sheet entities, and from the cross-border linkages of financial institutions. Broadly, there is a need to address information gaps in three main areas that are inter-related - the build-up of risk in the financial sector, cross border financial linkages and vulnerability of domestic economies to shocks.3 Further, for efforts to improve data coverage and address gaps to be effective and efficient, requires action and cooperation from individual institutions, supervisors, industry groups, central banks, statistical agencies, and international institutions. Existing reporting frameworks should be used where possible. While data gaps may be an inevitable consequence of the ongoing development of markets and institutions, these gaps are highlighted, and significant costs incurred, when a lack of timely, accurate information hinders the ability of policy makers and market participants to develop effective policy responses. Consequently, staff of the IMF and the FSB Secretariat, in consultation with official users of economic and financial data in G-20 economies and key international organizations, identified 20 recommendations that need to be addressed. Some of these include: -

The need to strengthen the data essential for effectively capturing and monitoring the build-up of risk in the financial sector. This calls for the enhancement of data availability, both in identifying the build-up of risk in the banking sector and in improving coverage in those segments of the financial sector where the reporting of data is not well established, such as the nonbank financial corporations. -

The need to improve the data on international financial network connections. This calls for enhanced information on the financial linkages of global systemically important financial institutions (G-SIFIs), as well as the strengthening of data gathering initiatives on cross-border banking flows, investment positions, and exposures, in particular to identify activities of nonbank financial institutions. -

The need to strengthen the data needed to monitor the vulnerability of domestic economies to shocks. -

The need to promote the effective communication of official statistics to enhance awareness of the available data for policy purposes. These recommendations were endorsed by the G20 finance ministers and central bank governors at their meeting in Scotland in November 2009. 2.3 How should the data be organized and reported? Standardization of data reporting allows efficient aggregation of information for effective monitoring and analysis. It was therefore “important to promote the use of common reporting systems across countries, institutions, markets, and investors to enhance efficiency and transparency. Standardized reporting allows the assemblage of industry-wide data on counterparty credit risk or common exposures, thus making it possible for stakeholders to construct basic measures of common risks across firms and countries”.4 2.4 Who should have access to the data? It is also acknowledged that the enhanced data collection by regulatory and/or supervisory agencies must be accompanied by a process for making data available to key stakeholders as well as the public at large. This is consistent with the “public good” nature of data, while safeguarding the confidentiality concerns of both the home and host regulators and supervisors. Differences in accounting standards across countries were expected to be addressed through the legislative framework. 2.5 Legal Entity Identifier Introducing a single global system for uniquely identifying parties to financial transactions is expected to offer many benefits. There is widespread agreement among the global regulatory community and financial industry participants on the merits of establishing such a legal entity identifier (LEI) system. The system would provide a valuable ‘building block’ to contribute to and facilitate many financial stability objectives, including: improved risk management in regulated entities; better assessment of micro and macro prudential risks; facilitation of orderly resolution; addressing financial fraud; and enabling higher quality and accuracy of financial data overall. But despite numerous past attempts, the financial industry has not been successful in establishing a common global entity identifier and it is reported to be lagging behind many other industries in agreeing and introducing a common global approach to entity identification. The financial crisis has provided a renewed spur to the development of a global LEI system. International regulators have recognized the importance of the LEI as a key component of necessary improvements in financial data systems. The value of strong co-operation between private sector stakeholders and the global regulatory community is widely accepted in this context. The lack of a common, accurate and sufficiently comprehensive identification system for parties to financial transactions raises many problems. A single firm may be identified by different names or codes which an automated system may interpret as references to different firms. The ultimate aim is to put in place a system that could deliver unique identifiers to all legal entities participating in financial markets across the globe. Each entity would be registered and assigned a unique code that would be associated with a set of reference data (e.g. basic elements such as name and address, or more complex data such as corporate hierarchical relationships). Potential users, both regulators and industry, would be granted free and open access to the LEI and to shared reference information for any entity across the globe and could build this into their internal automated systems. A high quality LEI would thus offer substantial benefits to financial firms and market participants that currently spend large amounts of money on reconciling and validating counterparty information, as well as offering major gains to risk managers and the regulatory community in relation to the identification, aggregation and pooling of risk information.5 2.6 BIS – Principles for effective risk data aggregation and risk reporting The financial crisis revealed that many banks, including global systemically important banks (G-SIBs), were unable to aggregate risk exposures and identify concentrations fully, quickly and accurately. This meant that banks' ability to take risk decisions in a timely fashion was seriously impaired with wide-ranging consequences for the banks themselves and for the stability of the financial system as a whole. The Basel Committee issued “Principles for effective risk data aggregation” in 2014 which is expected to strengthen banks' risk data aggregation capabilities and internal risk reporting practices. Implementation of the principles will strengthen risk management at banks - in particular, G-SIBs - thereby enhancing their ability to cope with stress and crisis situations. The principles pertain to various aspects like governance, data architecture and IT infrastructure, accuracy and integrity, completeness, timeliness, adaptability, accuracy, comprehensiveness, clarity and usefulness, frequency and distribution. The Basel Committee and the Financial Stability Board (FSB) expect banks identified as global systemically important banks (G-SIBs) to comply with the Principles by 1 January 2016. In addition, the Basel Committee strongly suggests that national supervisors also apply the Principles to banks identified as domestic systemically important banks (D-SIBs) three years after their designate on as such by their national supervisors. A summary of principles and detailed requirements is indicated at Annex I. 2.7 Conclusion Recent years have seen significant progress in the availability and comparability of economic and financial data. However, the present crisis has thrown up new challenges that call for going beyond traditional statistical production approaches to obtain a set of timely and higher-frequency economic and financial indicators, and for enhanced cooperation among international agencies in addressing data needs. Organizational issues also need to be tackled, especially in developing common and standardized datasets on exposures of G-SIFIs. Thus, data standardization, data quality issues and data gaps have become key focus in the aftermath of financial crisis.

Chapter III- Data standards in banking/financial sector 3.1 Introduction: This chapter is concerned with various aspects relating to current data and information standards in the financial sector used globally. Standards make modern commerce possible. Data standards allow the exchange of data between systems; aggregation of data from multiple sources; comparison of data among unrelated systems; and automation of processes for storing, reporting, and processing data. This chapter provides context for usage of standards in finance and discusses on various types of standards and Committee’s recommendations are provided. 3.2 Context for standards in finance The exponential growth in computing power and the resulting proliferation of competing protocols and standards, led to a significant increase in the level of complexity required to assemble, maintain, and evolve business information systems. In 1987, John Zachman created the Zachman Framework, the first attempt at organizing the complete set of information required to manage and maintain systems planning for large organizations. The Technical Architecture Framework for Information Management (TAFIM) was first published in 1991,with an emphasis on non-proprietary, open systems architectures and subsequently emerged the Open Group Architecture Framework (TOGAF) system approach, which has significantly influenced government and defense-related Enterprise Architecture (EA) approaches. 3.3 Enterprise architecture framework MIT Centre for Information Systems Research defined EA as the specific aspects of a business that are under examination: EA is the organizing logic for business processes and IT infrastructure reflecting the integration and standardization requirements of the company’s operating model. The operating model is the desired state of business process integration and business process standardization for delivering goods and services to customers. The Enterprise Architecture body of knowledge defines EA as a practice, which “analyzes areas of common activity within or between organizations, where information and other resources are exchanged to guide future states from an integrated viewpoint of strategy, business and technology. The Zachman framework helps in terms of defining interoperability and standards for data. It provides for a structured way of viewing an enterprise. It consists of a two dimensional classification matrix based on the intersection of six communication questions (What, Where, When, Why, Who and How) with five levels of reification, intended to transforming the most abstract ideas into more concrete ideas at the operations level. 3.4 Industry consortiums One of the fundamental requirements for information and communication technology is interconnection and interoperability. The need to develop standard interfaces and communication protocols behind which multiple companies could compete in terms of service offering spawned an entire industry of standards organizations, referred to as consortiums. There are estimated to be roughly 500 industry standards consortiums. Consortiums are often specific purpose with a specific time horizon, while some continue on and have quite a long time horizon. 3.5 The Internet standards organizations The collection of standards referred to as the Internet standards are managed by a group of related organizations like Internet Engineering Task Force (IETF), Internet Society (ISOC), Internet Architecture Board (IAB), Internet Corporation for Assigned Numbers and Names (ICANN). 3.6 International Standards - International Organization for Standardization (ISO) The International Organization for Standardization (ISO), located in Geneva, Switzerland is the premier standards body responsible for the development and management of international standards. The standards work is performed within ISO’s Technical Committees, their subcommittees and working groups. The listing of various ISO standards for banking/finance is indicated at Annex II. 3.7 Horizontal technology standard consortiums Three organizations were formed to provide horizontal information technology solutions in response to technical innovations. While the World Wide Web Consortium(W3C) has mainly stayed to its core mission of supporting XML horizontal technologies, both the Organization for the Advancement of Structured Information Standards(OASIS) and the Object Management Group (OMG) have evolved since their origin. World Wide Web Consortium (W3C) - The W3C was created to support open standards for the World Wide Web in 1994 by Tim Berners-Lee, the inventor of the World Wide Web. The major contributions from the W3C include: HTML (HyperText Markup Language); The Extensible Markup Language (XML) in 1996; the Semantic Web in2001; and Web Services in 2002. Many of the financial data-interchange formats used widely like ISO 20022, XBRL, FpML, FIXML are based upon XML. The Object Management Group (OMG)-The Object Management Group (OMG) was created in 1989 as part of the open systems movement. The mission of the OMG has evolved along with the industry. While initially it was responsible for creating a heterogeneous distributed object standard, some of OMG’s latest initiatives are focused on the financial services industry and semantics. The Financial Domain Task Force (FDTF) is a partnership between the OMG and the EDM Council. 3.8 Standards for Identification There is also considerable variations around business practices by firms providing both standard and proprietary identifiers. A few standard financial identifiers are indicated below: Currency codes- The Codes for Representation of Currencies and Funds (ISO 4217) defines the three character currency codes that are used to identify currencies. Country codes- The Codes for the Representation of Names of Countries and their Subdivisions(ISO 3166) standard provides standard codes for countries. Market identifier codes- The Codes for Exchanges and Market Identification (MIC) (ISO 10383) standard provides standard codes for markets and venues. Identification of financial instruments -The ISIN is a standard beneath ISO TC68.The standard is administered by the Association of National Numbering Agencies. The ISIN was created in response to the globalization of markets where firms traded and held positions in securities across countries. The ISIN provides a country prefix in preceding the existing national identifiers. Legal entities and parties- A new Legal Entity Identifier (LEI) standard has been approved as an ISO standard. 3.9 Standards for code lists Between the static structure and dynamic data are data items that change at a slower rate. These data items are at the same time data, but also have a structural role. Genericode- Genericode is an XML based standard that provides a structure for managing code lists. SDMX- Statistical Data and Metadata eXchange (SDMX) is a standard for exchanging statistical data. While broadly suited for most types of statistics, it was initially developed by central bankers and has been largely used for economic statistics. The original sponsoring institutions were the Bank for International Settlements, the European Central Bank, Eurostat, the International Monetary Fund (IMF), the Organization for Economic Co-operation and Development(OECD), the United Nations Statistics Division, and the World Bank. With the release of v2.1 in 2011, the information model of SDMX can be viewed as becoming a horizontal technology for managing statistical time series of any type. XBRL-The use of the XBRL terminology for managing code lists has also been explored as an alternative standard. 3.10 Financial technology standards Data technology standards support how information is collected, aggregated and transported. An example of a standard is the extensible Business Reporting Language (XBRL), which defines methods to allow machine readable data on financial statements that can then be transported, exchanged, and stored in a consistent manner. Standards exist both for the basic data element level and as lists and messages to represent financial instruments and financial transactions. Standards also exist for the syntaxes or physical representation of the elements. Within the financial services industry, there are multiple messaging standards being used, and internationally the Standards Coordination Group has come up with an approach that leverages and includes these standards into a broader framework without reinventing and creating redundant messages that increase implementation costs and create uncertainty or confusion for the industry. The investment roadmap issued in September, 2010 indicates commitment of each concerned organization (FIX, FpML, SWIFT, XBRL, ISITC and FISD) to the ISO 20022 business model by laying the groundwork for defining a common underlying financial model and ensuring some level of interoperability by producing a consistent direction for utilization of messaging standards and communicating that direction clearly to the industry. The respective business processes included in the roadmap are or will be incorporated within the ISO 20022 business model and the model allows for ISO 20022 XML based messages to be created to support the business processes, while at the same time provides in certain circumstances for existing domain specific syntaxes and protocols to be maintained in order to protect the investments of market participants. The organizations have reported that they are committed to meeting on a consistent basis to ensure the roadmap continues to accurately depict the standards environment. ISO 20022 - ISO 20022 is a methodology used by the financial industry to create message standards for a few functions. Its business modelling approach allows users and developers to represent financial business processes and underlying transactions in a formal but syntax-independent notation. As the scope of this standard covers the global financial services industry, this allows coverage across various business areas (securities, payments, foreign exchange, for example), and across asset classes. The ISO 20022 method starts with the modeling of a particular business process along with details of relationships and interactions between the actors, and the information that they must share to execute the process also are identified. The output is subsequently organized into a formal business model using UML (Unified Modeling language).The formal business model of the process and the business information needed to support this particular process are organized into “business components” and placed into a repository. ISO 20022 describes a Metadata Repository containing descriptions of messages and business processes, and a maintenance process for the Repository Content. The Repository contains a large amount of financial services metadata that has been shared and being standardized across the industry. The Financial Information Exchange Protocol (FIX) -The FIX Protocol was created beginning in 1992. It is reported to be a standard of the securities front office. Many instructions relating to interest, trade instructions, executions etc., can be sent using the FIX protocol. FIX supports equities, fixed income, options, futures, and FX. In 2003, FIXML was optimized to greatly reduce message size to meet the requirements for listed derivatives clearing. FIXML is reported to be widely used for reporting of derivatives positions and trades in the USA. The eXtensible Business Reporting Language (XBRL) - XBRL, eXtensible Business Reporting Language, is an XML based data technology standard that makes it possible to “tag” business information to make it machine readable. As the business information is made machine readable through the tagging process, this information does not need to be manually entered again and can be transmitted and processed by computers and software applications. The use of XBRL has expanded into the financial transaction processing area also in recent years. The Financial Product Markup Language (FpML) -The Financial Products Markup Language was created in response to the increased use and rapid innovation in over-the-counter financial derivatives. It uses the XML syntax and was specifically developed to describe the often complicated contracts that form the base of financial derivative products. It is widely used between broker-dealers and other securities industry players to exchange information on Swaps, CDOs, etc. ISO 20022 and XBRL- In June 2009, SWIFT, DTCC and XBRL US commenced an initiative to develop a corporate actions taxonomy using XBRL. Each of the elements in the corporate actions taxonomy corresponds to a message element in the ISO 20022 Corporate Actions Notification message. This initiative attempts to bring together a XBRL standard with the standard used by financial intermediaries to announce and process corporate actions events. ISO 8583 – It is used for almost all credit and debit card transactions, including ATMs. This type of messages are exchanged daily between issuing and acquiring banks. 3.11 Reference data standards The 2008 financial crisis brought to fore the fact that this is one of the most neglected areas in the financial services industry. In respect of investment area, reference data define the financial instruments that are used in the financial markets. 1. Market Data Definition Language (MDDL)- MDDL was created to provide for a comprehensive reference data model as an XML based interchange format and data dictionary for financial instruments, corporate events, and market related, economic, and industrial indicators, which started in 2001 by the Software and Information Industry Association’s Financial Information Services Division. The direct adoption of MDDL is very limited though it is reported that MDDL’s value as a reference model continues. 2. Open MDDB - FIX Protocol Ltd. and FISD jointly developed a relational database model for reference data derived from MDDL in 2009. It also provides support for maintenance and distribution of reference data using FIX messages. The EDM Council, FISD, and FIX had entered into an agreement for EDM Council to facilitate and support evolution of the Open MDDB by the community. 3.12 Standards for representing business model 1. ISO 20022 As a result of the integration of ISO 19312 into ISO 20022, the financial messaging standard was expanded to be a business model of reference data for the financial markets in addition to the messages. 2. Financial Information Business Ontology (FIBO) The EDM Council and the OMG created a joint working group, the Financial Data Task Force, “to accelerate the development of ‘sustainable, data standards and model driven’ approach to regulatory compliance. “The initiative focused on semantics instead of traditional modeling techniques. 3.13 Recommendations on data standards 1) Regulatory data reporting standards - The data exchange standards need to be based on open standards and allow for standardization of data elements and minimizing data duplication/redundancy. RBI had already embarked on XBRL(which is based on XML platform) as the standard platform for a set of regulatory returns which may be continued for rest of returns or data elements. Thus, XBRL may be the reporting standard for all regulatory reporting of structured data. 2) The ISO 20022 standard is recommended to be the messaging standards for the critical payment systems. RTGS in India already uses the ISO 20022 message formats. 3) The well known Statistical Data and Metadata eXchange (SDMX) is recommended as a standard for exchanging statistical data. 4) In regard to standardisation of coding structures, in accordance with international standards, the ISO 4217 currency codes and ISO 3166 country codes can be used. 5) System of National Accounts (SNA) 2008 can be used for classification of institutional categories and International Standard Industrial Classification (ISIC)/ National Industrial Classification (NIC) codes for economic activity being financed by a loan may be incorporated. 6) In order to acquire single view of transactions in respect of a customer, unique customer id is allotted by individual banks. Given that no single identifier can represent all categories of customers of banks, the differentiation may need to be made by mapping with the identifiers presently available. Recently, Clearing Corporation of India Limited (CCIL) has been selected to act as a Local Operating Unit in India, for issuing globally compatible unique identity codes (named as legal entity identifier or LEI) to entities which are parties to a financial transaction in India. Given the LEI initiative, efforts to facilitate LEI for legal entities involved in financial transactions across financial system needs to be expedited to maximise coverage over the medium term. 7) Given the complexity of some corporate entity with numerous subsidiaries including step down subsidiaries, there is a need for usage of LEI or similar methodology to link the complex hierarchy of any corporate may be considered to facilitate ease of identification of total credit exposure of corporate groups. While it is reported that LEI application of CCIL has provision for the same, the utility may need to effectively leveraged to map the corporate group hierarchy. 8) While presently LEI caters to legal entities involved in financial transactions, ultimately LEI or similar system needs to be made broad-based to incorporate other categories of customers like partnership firms and individuals. 9) For conduct of electronic transactions and reporting purposes in financial markets, well known international standards like ISO based standards can be considered where possible. 10) In order to take up data element/return standardisation through standardising or harmonising definitions, efforts of earlier working groups(Committee on rationalisation of returns and Committee on harmonisation of banking statistics) can be consolidated by setting up an inter-departmental project group within RBI which can work in a project mode so as to ensure comprehensive and effective implementation of standardisation and consistency of data element definitions across complete universe of returns/data requirements of RBI.

Chapter IV – Issues in data management and data quality in banks 4.1 Introduction With the advent of technology in banking, huge volume of data is produced and stored digitally. The transformation of banking in the form of anywhere/virtual banking has also resulted in increased information availability which necessitates banks to implement robust information management processes to facilitate effective Decision Support system. The ability of organizations to capture, manage, preserve and deliver the right information at the right time to the required personnel is one of the key success factors of Information Management. This chapter highlights various issues relating to data quality and challenges faced by banks in regard to data management and data quality. 4.1 Key issues relating to information management KPMG India’s Information Enabled Banking Survey (IEB) was conducted amongst select 10 private sector banks in South India during 2013. IEB was aimed to provide an overview of Information Management landscape across small and medium private sector banks. The various key issues highlighted in the survey included: Data Quality Only 33% of the respondents use automation in their monthly report generation process and 44% have minimal manual intervention so as to reduce Data Quality issues. Banks tend to collect information across multiple locations and multiple formats, thus potentially creating non standardized data. 67% of the respondents claimed to have clean and standardized data across systems. Standardization Standardization across business eliminates data duplication, data redundancy and cost associated with resolving these issues. All surveyed banks have standardized reporting formats at the local branch, region, zone and at the corporate level. Banks faced challenges in standardizing the report generation methodology despite having a standardized reporting form and clearly defined output. Banks use different technologies and databases to capture and store information. One of the key issues they may need to focus on is to address ‘multiple of truth’ scenarios to availability of ‘single version of truth’. Key observation was that standardization is needed at Extract, Transform and Load (ETL) process which would result in process efficiency, reduced manual intervention and reduced costs. 4.3 Data standards -Common issues in data and MIS in commercial banks Generally, various data quality and MIS related issues observed by RBI include: 1) Incomplete information and issues relating to fields in the core systems - Identifiers Missing or Invalid

- Incomplete or incorrect format of contact details like address, PIN code, contact numbers etc

- Date of Birth Missing

- Consumer Name Invalid/incomplete

- Ownership Indicator Invalid

- Date of Birth Invalid

- Ownership Indicator Missing

- Invalid status of accounts

- Non updation of accounts

- Non closure of accounts despite amount overdue and current balance being zero

- Incorrect entry of values/measures in respect of deposit or loan accounts

2) Issues with configuration of business products in the system 3) Incorrect activity or sector codes 4) No fields for capturing certain key fields which are either maintained manually or entered in ad-hoc or generic fields in the system 5) Data captured in electronic form that is not controlled (e.g. excel sheets or other desktop tools) 6) Though CBS was available, manual compilation of data from the branches 7) STP or near real time interface unavailable between various business systems and accounting systems 8) Incomplete master data and reference data 9) Deficiencies in mapping of data for assessing risks like liquidity risks All these factors could potentially impact business aspects like capital management and capital ratios, asset quality monitoring, funds and liquidity management ultimately impacting effective risk management. All these aspects indicate need for enhanced processes and procedures for data and information management in the bank and need for robust and standardized metadata. Committee identified various data related aspects relating in specific to credit area that would need to addressed to facilitate standardization across banking system. These are detailed at Annex III. 4.4 Key Drivers and challenges for the banking system The various new key drivers and challenges in the new milieu in banking include the following: (i) The regulatory environment in which banks in India are functioning is undergoing a paradigm shift. Apart from the basic approaches for handling major risk categories, Basel II further entails progressive advancement to sophisticated but complex risk measurement and management approaches to credit, market and operational risks depending on the size, sophistication and complexity of the respective banks. Some of the banks have applied to Reserve Bank of India for moving to Advanced Approaches of calculating Pillar I capital. (ii) In addition, Pillar 2 and Pillar 3 of Basel II emphasize the need for developing better risk management techniques in monitoring and managing risks not adequately covered or quantifiable under Pillar 1 and increased disclosure requirements. The banks are required to carry out Internal Capital Adequacy Assessment Process which comprises a bank’s procedures and measures designed to ensure appropriate identification and measurement of all risks to which it is exposed, an appropriate level of internal capital in relation to the bank’s risk profile and an application and further enhancement of risk management systems in the bank. (iii) Basel III Capital Regulations has commenced in India from April 1, 2013 and would be fully implemented as on March 31, 2019. There are various direct and related components of the Basel III framework like increasing quality and quantity of capital, enhancing liquidity risk management framework, leverage ratio, incentives for banks to clear standardised OTC derivatives contracts through qualified central counterparties, regulatory prescription for Domestic Systemically Important Banks and Countercyclical Capital buffer (CCCB) framework. (iv) The growing emphasis on fair treatment to customers calls for moving over from “Caveat Emptor”( Let the Buyer beware) to the principle “Caveat Venditor”(Let the seller beware) and focus on comprehensive consumer protection framework in financial sector in India. (v) Globally heightened regulatory requirements in respect of KYC / AML practices to prevent banks from being used, intentionally or unintentionally, by criminal elements for money laundering or terrorist financing activities. (vi) Extensive leverage of technology for internal processes and external delivery of services to customers requiring robust IT governance and Information security governance framework and processes in banks. (vii) In the background of growing volume of non - performing assets and restructured assets causing concern for the financial as well as the real sector in India, a framework for revitalizing distressed assets in the economy has been implemented with effect from April 1, 2014. The Framework lays down guidelines for early recognition of financial distress, information sharing among lenders and co-ordinated steps for prompt resolution and fair recovery for lenders. (viii) Impending developments in regulatory policies and economic environment are likely to result in banks facing a far more competitive environment in the coming years. As banks’ customers – both businesses and individuals - become global, banks will also need to keep pace with the customer demands and develop global ambitions. The challenge for banks will be to develop new products and delivery channels that meet the evolving needs and expectations of its customers. Thus, there is a need for effective information management practices and robust MIS. This calls for a robust data governance framework in banks.